In a previous topic, I described how to deploy a VMware vSAN cluster. VMware vSAN lets you create a hyperconverged cluster in a vSphere environment. Last time, we saw how to deploy this cluster by configuring the ESXi hosts, the distributed switch and the storage. Now that the cluster is working, we can deploy VMs in the vSAN datastore. To ensure the resilience of the VM storage in case of a fault (a storage device issue, an ESXi host down and so on) and to ensure performance, we have to leverage VM Storage Policies.

To be deployed in the vSAN cluster, a VM must be bound to a VM Storage Policy. This policy lets you configure the following settings per VM:

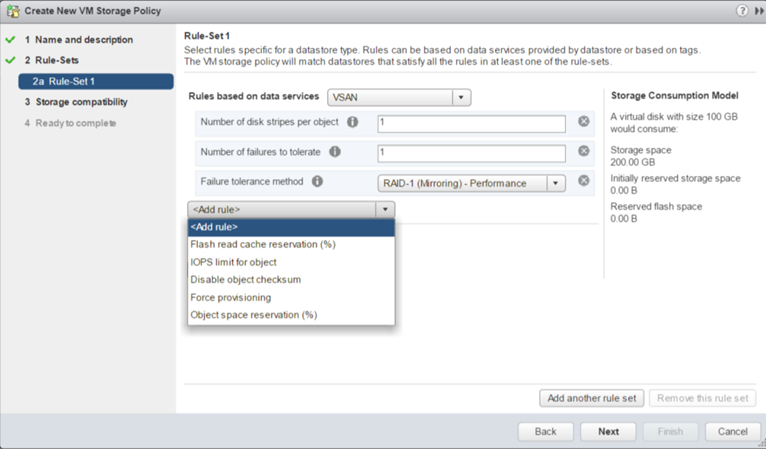

- IOPS limit for object: This setting lets you cap the IOPS of an object such as a VMDK.

- Flash read cache reservation (%): This setting is useful only in a hybrid vSAN configuration (SSD + HDD). It defines the amount of flash capacity reserved for the read I/O of a storage object such as a VMDK.

- Disable object checksum: vSAN uses checksums to detect whether an object such as a VMDK is corrupted and to repair the issue automatically. By default, this verification runs once a year. For performance reasons, you can disable it with this setting (not recommended).

- Force provisioning: The VM Storage Policy verifies that the datastore is compliant with the rules defined in the policy. If the datastore is not compliant, the VM is not deployed. If you enable Force provisioning, the VM is deployed even if the datastore is not compliant with the policy.

- Object space reservation: By default, the VMDK is thin provisioned in the vSAN datastore because this value is set to 0%. If you change this value to 100%, the VMDK is thick provisioned. If you set the value to 50%, half of the VMDK capacity is reserved. If deduplication is enabled, you can set this value only to 0% or 100%, nothing in between.

- Number of disk stripes per object: This setting defines the number of stripes for a VM object such as a VMDK. By default, the value is set to 1. If you choose two stripes, for example, the VMDK is striped across two physical disks. Change this value only if you have performance issues and you have identified that they come from a lack of IOPS on the physical disk. This setting has no impact on resilience.

- Number of failures to tolerate (FTT): This setting specifies the resilience of the object inside vSAN, that is, the number of faults it tolerates. If the value is 1, the object can survive a single failure. If the value is 2, the object can survive two failures, and so on. The higher the number of failures to tolerate, the more storage the object consumes for resilience.

- Failure tolerance method: This setting lets you choose either RAID-1 or RAID-5/6. The RAID layout works over the network and is spread across the vSAN nodes. This setting works in conjunction with FTT. With FTT set to 1, the RAID-1 configuration requires at least three nodes and RAID-5 requires four nodes (we will go deeper into this aspect in the next section).
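To make the storage cost of FTT and the failure tolerance method concrete, here is a minimal Python sketch. It assumes a fully written VMDK, ignores witness components, and uses the usual erasure coding layouts of the original vSAN architecture (RAID-5 as 3 data + 1 parity for FTT = 1, RAID-6 as 4 data + 2 parity for FTT = 2). The function name `raw_capacity_gb` is hypothetical and is not part of any VMware API.

```python
def raw_capacity_gb(vmdk_gb: float, ftt: int, method: str = "RAID-1") -> float:
    """Estimate the raw capacity consumed by a fully written VMDK.

    Assumptions: witness components are ignored, and RAID-5/6 uses the
    3+1 (FTT = 1) and 4+2 (FTT = 2) layouts.
    """
    if ftt < 0:
        raise ValueError("FTT cannot be negative")
    if method == "RAID-1":
        # Mirroring keeps FTT + 1 full copies of the VMDK.
        return vmdk_gb * (ftt + 1)
    if method == "RAID-5/6":
        # Erasure coding stores data plus parity instead of full copies.
        overhead = {1: 4 / 3, 2: 6 / 4}
        if ftt not in overhead:
            raise ValueError("RAID-5/6 supports only FTT = 1 or FTT = 2")
        return vmdk_gb * overhead[ftt]
    raise ValueError(f"unknown failure tolerance method: {method}")


if __name__ == "__main__":
    for method, ftt in [("RAID-1", 1), ("RAID-1", 2), ("RAID-5/6", 1), ("RAID-5/6", 2)]:
        used = raw_capacity_gb(100, ftt, method)
        print(f"{method} FTT={ftt}: about {used:.0f} GB raw for a 100 GB VMDK")
```

Under these assumptions, a 100 GB VMDK consumes 200 GB in RAID-1 with FTT = 1 and 300 GB with FTT = 2, which is why mirroring becomes expensive as FTT grows.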

RAID Level, FTT and stripes

In a vSAN environment, a storage object such as a VMDK is ready when more than half of its components are alive (this is the quorum). This means that in a RAID 1 configuration with only two replicas, if I lose one replica, the VMDK is not ready (50% of the components are down). That is not good for high availability. This is why VMware introduced a witness system. The witness has a vote just like the components, so it takes part in the quorum. The schema below presents a VMDK deployed in RAID 1 with FTT = 1.

In this example, even if I lose a node, the VMDK is still ready. If I lose two nodes, the VMDK is not ready anymore. So the VMDK is resilient to one failure (FTT = 1). If I set the FTT to 2, an additional replica is deployed. Each replica is the same size as the VMDK. This is why the more you increase the FTT, the more storage is consumed for resilience.
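The quorum rule can be sketched in a few lines of Python. This is only an illustration of the "more than half of the votes" rule described above, assuming one vote per component and per witness; the function name `is_accessible` is hypothetical.

```python
def is_accessible(total_votes: int, surviving_votes: int) -> bool:
    """Return True when strictly more than half of the votes survive."""
    if not 0 <= surviving_votes <= total_votes:
        raise ValueError("surviving_votes must be between 0 and total_votes")
    # Integer comparison avoids floating point: alive > total / 2.
    return surviving_votes * 2 > total_votes


if __name__ == "__main__":
    # RAID 1, FTT = 1: two replicas and one witness, one vote each.
    print("one node lost:", is_accessible(total_votes=3, surviving_votes=2))
    print("two nodes lost:", is_accessible(total_votes=3, surviving_votes=1))
    # Two replicas without a witness: losing one replica leaves exactly 50%.
    print("no witness, one replica lost:", is_accessible(total_votes=2, surviving_votes=1))
```

The last case shows why the witness matters: with only two votes, a single failure leaves exactly half of them, which is not a majority.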

The number of disk stripes can also affect the number of nodes required, because each stripe is also a component. If I take the above example again and this time set the number of stripes to 2, it has no impact on the required number of nodes:

In the above example, I have 6 components and one witness. If I lose node 1 or node 2, 3 components and the witness remain (a majority: 4 votes out of 7). So the VMDK is still ready.

Now I’m implementing a VM Storage Policy with RAID 1, FTT = 2 and the number of stripes set to 2:

Although I have added a fourth node to the vSAN cluster for the third replica (component 3), the above solution is not good. In this solution I have a total of 9 components. What happens if I lose node 1 and node 2? Only three components and the witness remain (4 votes out of 10). So the quorum is not reached and the VMDK is not ready. The schema below shows the right design for RAID 1 with FTT = 2 and the number of stripes set to 2. And yes, you need 5 nodes to achieve this configuration.
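The failure scenarios above can be replayed with a small Python sketch that counts votes per node. The placements are assumptions reconstructed from the component counts given in the text (three components per data node and one witness on its own node); the node names and the function `survives` are hypothetical.

```python
from typing import Iterable, Mapping


def survives(votes_per_node: Mapping[str, int], failed_nodes: Iterable[str]) -> bool:
    """Return True if the object keeps quorum after the given nodes fail."""
    failed = set(failed_nodes)
    total = sum(votes_per_node.values())
    alive = sum(votes for node, votes in votes_per_node.items() if node not in failed)
    # Quorum requires strictly more than half of all votes.
    return alive * 2 > total


# Assumed layout for RAID 1, FTT = 1, 2 stripes: 6 components + 1 witness.
THREE_NODE_LAYOUT = {"node1": 3, "node2": 3, "node3": 1}

# Assumed layout for the flawed RAID 1, FTT = 2, 2 stripes design:
# 9 components on three nodes + 1 witness on the fourth node.
FOUR_NODE_LAYOUT = {"node1": 3, "node2": 3, "node3": 3, "node4": 1}

if __name__ == "__main__":
    print("FTT=1, node1 lost:", survives(THREE_NODE_LAYOUT, ["node1"]))
    print("FTT=2, node1 lost:", survives(FOUR_NODE_LAYOUT, ["node1"]))
    print("FTT=2, node1 and node2 lost:", survives(FOUR_NODE_LAYOUT, ["node1", "node2"]))
```

With the four-node layout, losing two data nodes leaves 4 votes out of 10, so the object does not tolerate two failures even though the policy asks for FTT = 2.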

Play with VM Storage Policy

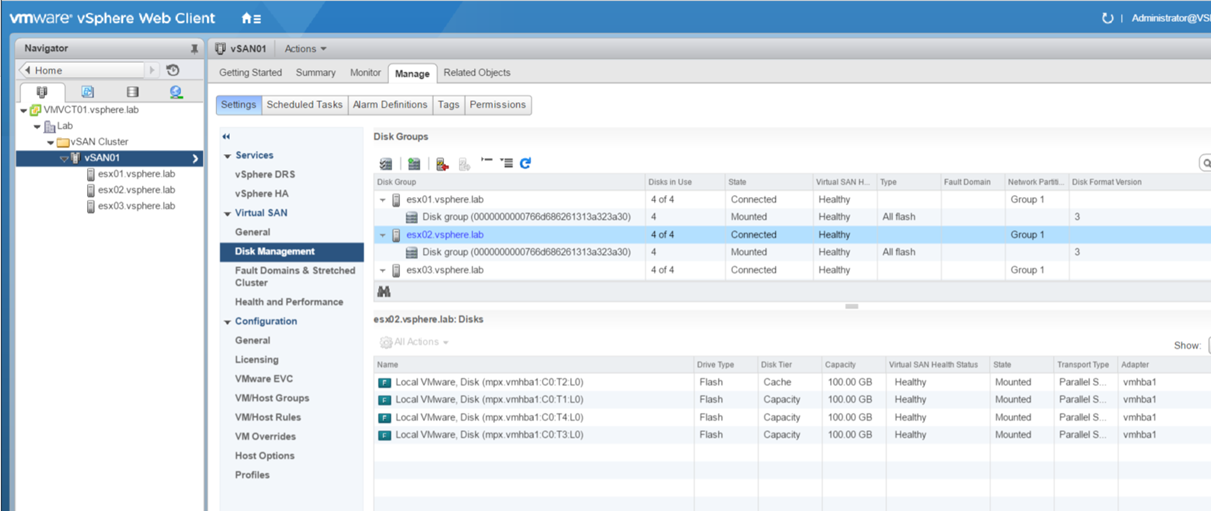

Last time I implemented a vSAN cluster composed of three nodes. Each node has four disks (one flash device and three HDDs).

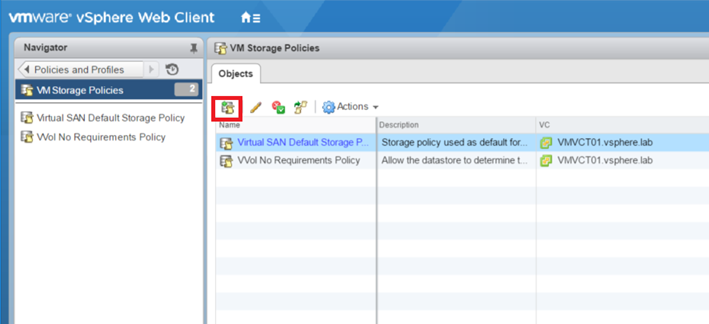

The default VM Storage Policy is called Virtual SAN Default Storage Policy, but you are encouraged to create your own rather than use this one. To create a VM Storage Policy, navigate to Policies and Profiles and click on VM Storage Policies. Next, click on the button circled in red in the screenshot below.

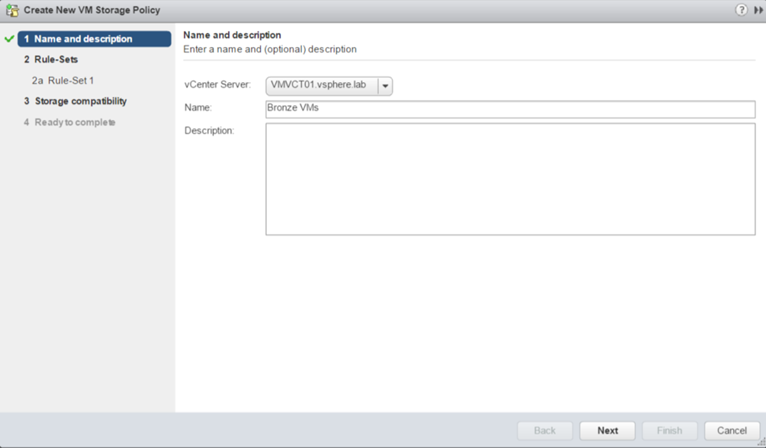

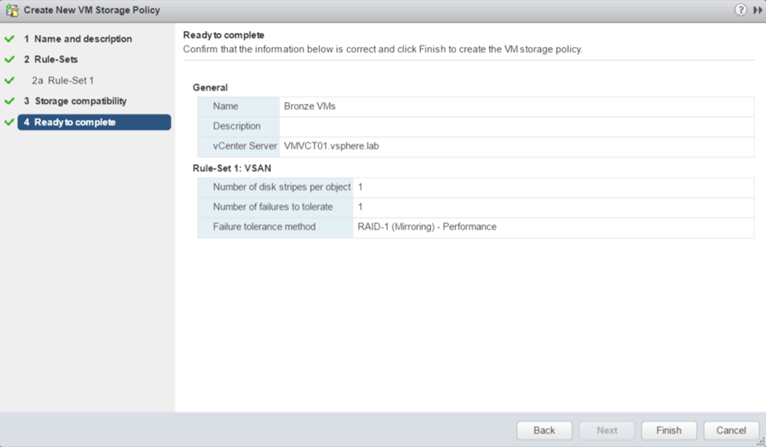

Then give a name to the VM Storage Policy. I have called mine Bronze VMs.

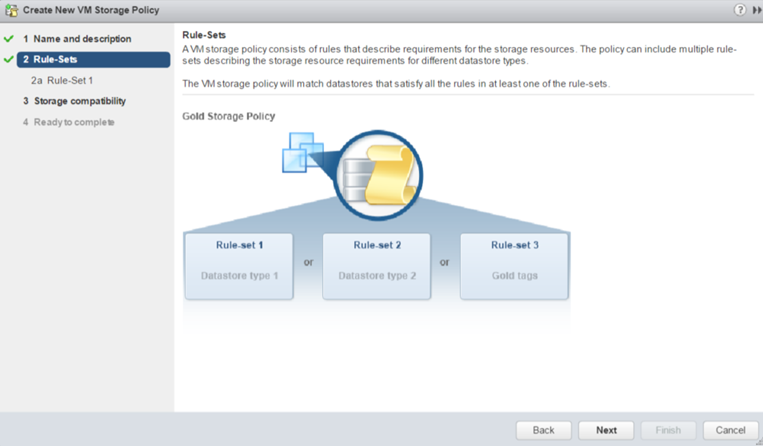

The screenshot below explains that a VM Storage Policy can include multiple rule sets to establish the storage requirements.

For this example, I set the failure tolerance method to RAID 1, the FTT to 1 and the number of disk stripes to 1 (this deploys the same layout as the first schema of the previous section).

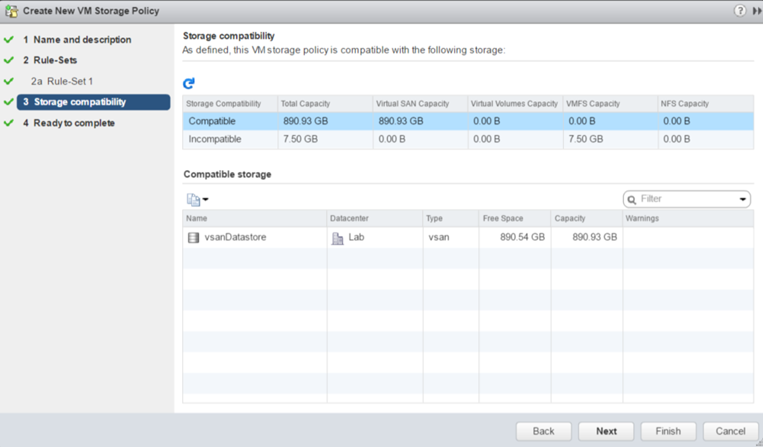

Then the wizard shows me the datastores that are compatible with the VM Storage Policy.
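As a rough illustration of the kind of check behind this compatibility list, the sketch below models the Bronze VMs policy and compares its minimum host count with the three-node cluster. It is not the wizard's actual logic: it assumes the usual vSAN minimums (2 x FTT + 1 hosts for RAID-1, 4 hosts for RAID-5, 6 hosts for RAID-6) and does not model the stripe width. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoragePolicy:
    name: str
    method: str  # "RAID-1" or "RAID-5/6"
    ftt: int
    stripes: int = 1  # kept for completeness, not used in the host count


def min_hosts(policy: StoragePolicy) -> int:
    """Minimum number of hosts assumed for the policy (stripes not modeled)."""
    if policy.method == "RAID-1":
        # FTT + 1 replicas plus FTT witnesses, each on a separate host.
        return 2 * policy.ftt + 1
    if policy.method == "RAID-5/6":
        hosts = {1: 4, 2: 6}
        if policy.ftt not in hosts:
            raise ValueError("RAID-5/6 supports only FTT = 1 or FTT = 2")
        return hosts[policy.ftt]
    raise ValueError(f"unknown failure tolerance method: {policy.method}")


def is_compatible(policy: StoragePolicy, host_count: int) -> bool:
    """Return True if the cluster has enough hosts for the policy."""
    return host_count >= min_hosts(policy)


if __name__ == "__main__":
    bronze = StoragePolicy(name="Bronze VMs", method="RAID-1", ftt=1, stripes=1)
    print(bronze.name, "needs", min_hosts(bronze), "hosts")
    print("compatible with a 3-node cluster:", is_compatible(bronze, host_count=3))
```

With these assumptions, the Bronze VMs policy fits the three-node cluster, whereas a RAID-1 policy with FTT = 2 would need five hosts, in line with the design discussed in the previous section.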

To finish, the wizard shows you a summary of your VM Storage Policy. Click on Finish to create the policy.

Deploy a VM in vSAN

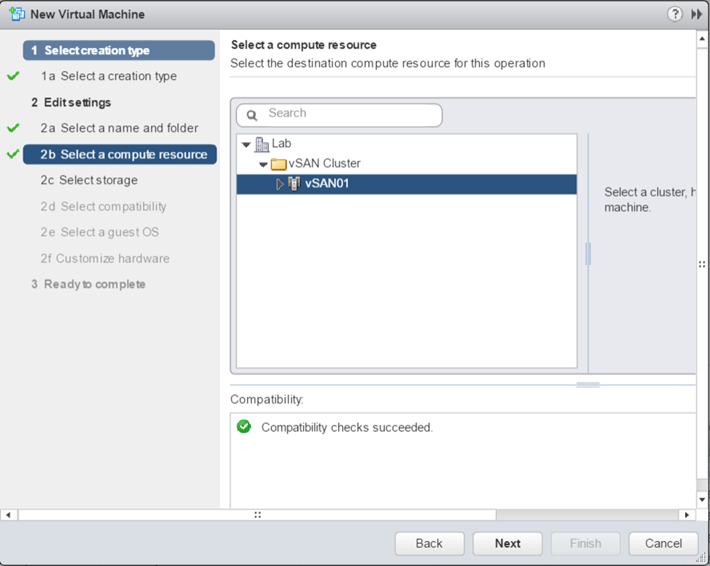

Once the VM Storage Policy is created, you can deploy a VM. When you create the VM, select the vSAN cluster as shown in the screenshot below.

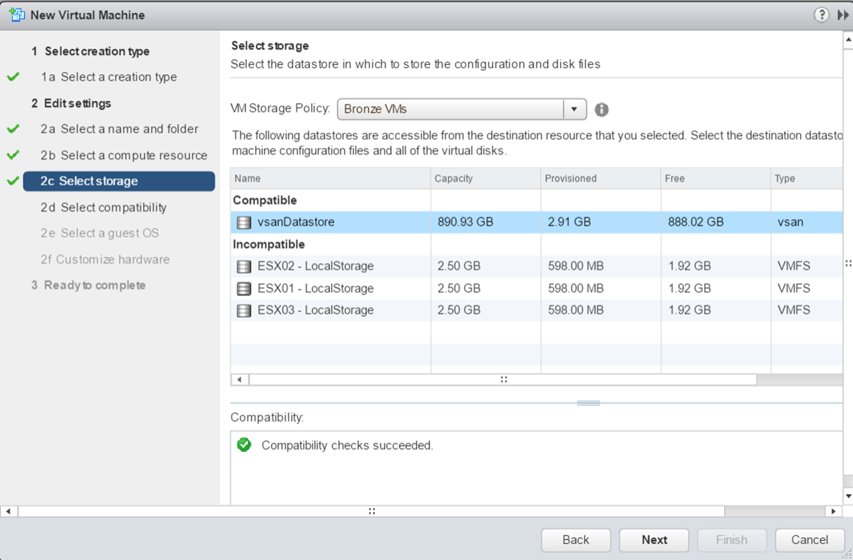

Then select the VM Storage policy and a compatible datastore as below.

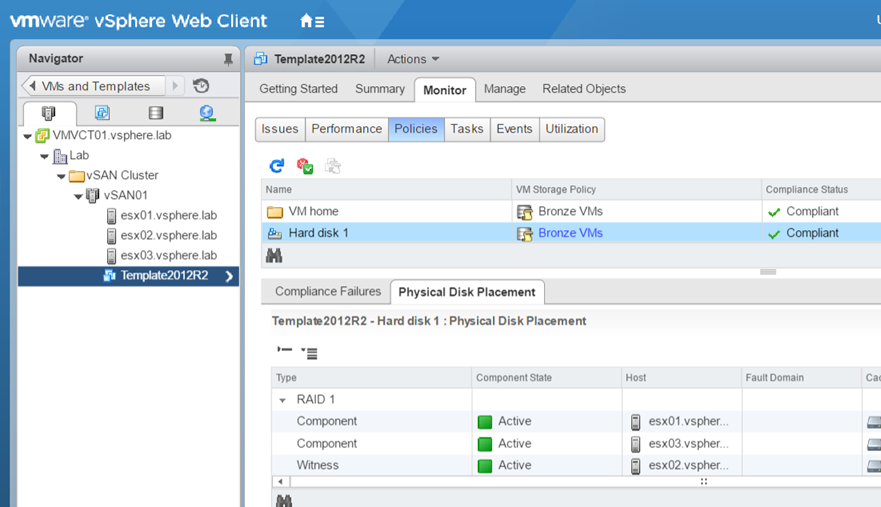

Once the VM is deployed, you can navigate to the VM, open the Monitor tab and select Policies. As you can see below, I have two components spread across two nodes and one witness hosted on another node.